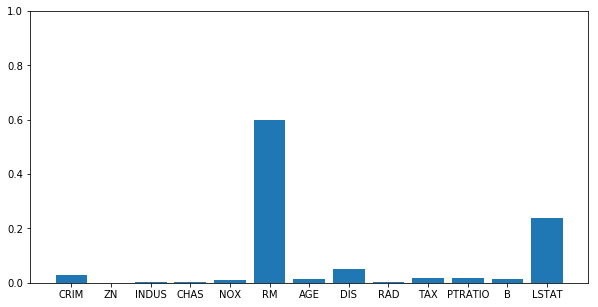

Feature importance is a key concept in machine learning that refers to the relative importance of each feature in the training data. In other words, it tells us which features are most predictive of the target variable. Determining feature importance is one of the key steps of the machine learning model development pipeline. It can be calculated in a number of ways, but most methods compute a score that reflects how often a feature is used in the model and how much it contributes to the overall predictions.

Why is feature importance important? Because it helps us understand which features matter most to our model and which ones we can safely ignore. This, in turn, helps us simplify our models and make them more interpretable. Feature importance can also help us identify potential problems with our data or our modeling approach. For instance, if a feature we expect to be highly important is missing from our training data, we may want to go back and collect that data. Alternatively, if a feature is consistently ranked as unimportant, we may want to question whether it is truly relevant for predicting the target variable.

Feature importance is used to select features for building models, to debug models, and to understand the data. The outcome of the feature importance stage is a set of features along with a measure of their importance. Once the importance of the features has been determined, feature selection techniques can be applied to keep the most important ones. Selecting the key features yields models with lower computational cost and reduces the generalization error caused by noise from less important features.

Feature importance is often reported on a scale from 0 to 1, with 0 indicating that the feature has no importance and larger values indicating greater importance. The impurity-based importances in scikit-learn, for example, are non-negative and normalized to sum to 1. Some other techniques, such as permutation importance, can yield negative values, which suggest that the feature is actually harmful to model performance.

Random Forest for Feature Importance

Feature importance can be measured using a number of different techniques, but one of the most popular is the random forest classifier. Using the random forest algorithm, feature importance can be measured as the average impurity decrease computed from all decision trees in the forest. This works regardless of whether the data is linearly separable.

Sklearn RandomForestClassifier for Feature Importance

Sklearn RandomForestClassifier can be used for determining feature importance. It collects the feature importance values so that they can be accessed via the feature_importances_ attribute after fitting the RandomForestClassifier model. The Sklearn wine data set is used for illustration: train the model using RandomForestClassifier, then read the importance values from the fitted model.
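As a rough illustration of this workflow, the sketch below trains a RandomForestClassifier on the Sklearn wine data set and prints the features ranked by their feature_importances_ values. The train/test split, the number of trees, and the random seed are assumptions chosen for the example, not settings taken from the original post.

```python
import numpy as np
from sklearn.datasets import load_wine
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import train_test_split

# Load the wine data set bundled with scikit-learn (13 numeric features).
wine = load_wine()
X, y = wine.data, wine.target
feature_names = wine.feature_names

# Hold out part of the data; the 70/30 split and seed are illustrative choices.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, random_state=1, stratify=y
)

# Fit the forest; n_estimators=200 is an assumed value, not a recommendation.
forest = RandomForestClassifier(n_estimators=200, random_state=1)
forest.fit(X_train, y_train)

# Impurity-based importances: one non-negative score per feature, summing to 1.
importances = forest.feature_importances_

# Print the features from most to least important.
order = np.argsort(importances)[::-1]
for rank, idx in enumerate(order, start=1):
    print(f"{rank:2d}. {feature_names[idx]:<30s} {importances[idx]:.4f}")
```

The exact ranking depends on the split and the random seed, so it is worth re-running with a few different seeds before dropping any feature on the basis of a low score.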
